
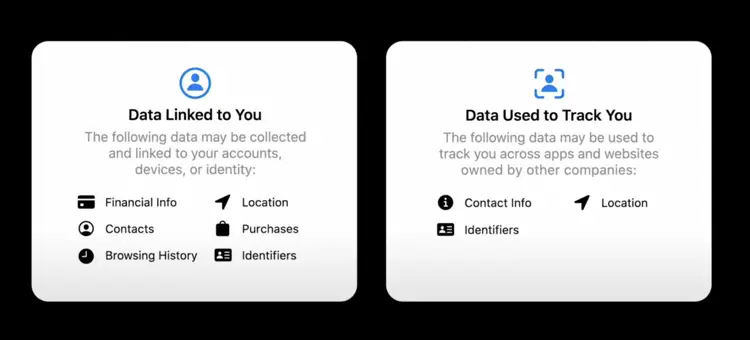

Big Tech cartel crown jewel Apple announced plans to automatically surveil all devices for known child pornography images in the upcoming iOS 15 and iPadOS 15 upgrades.

The scheme will implement a cryptographic hashing method that functions in a very similar way to a recently announced plan by a consortium of Big Tech social media companies such as Facebook, Microsoft, YouTube, and Google to automatically crack down on “white supremacy.”

In an update to the Apple website titled Expanded Protections for Children, the world’s most valuable company by market capitalization said it would deploy a series of three protections. The first is “new communications tools” that use “on-device machine learning” to alert parents when their children view potentially suggestive content, while keeping “private communications unreadable by Apple.”

In a description of how the function works, Apple says that when an account marked as a child is sent a sexually explicit photo, “The photo will be blurred and the child will be warned, presented with helpful resources, and reassured it is okay if they do not want to view this photo.”

“As an additional precaution, the child can also be told that, to make sure they are safe, their parents will get a message if they do view it. Similar protections are available if a child attempts to send sexually explicit photos. The child will be warned before the photo is sent, and the parents can receive a message if the child chooses to send it.”

‘Updated to intervene’

The update will also adjust Siri and Search to “provide parents and children expanded information and help if they encounter unsafe situations,” in addition to blocking “child sexual abuse material” (CSAM). Apple says the apps will be “updated to intervene when users perform searches for queries related to CSAM. These interventions will explain to users that interest in this topic is harmful and problematic, and provide resources from partners to get help with this issue.”

The root of the controversy is in the third prong of the protections for children, which will implement “new applications of cryptography to help limit the spread of CSAM online.” Apple says “CSAM detection will help Apple provide valuable information to law enforcement on collections of CSAM in iCloud Photos.”

Apple will use a cryptographic system that creates a “hash” of known CSAM material from the National Center for Missing and Exploited Children’s (NCMEC) database. The system will automatically check the photos stored on each iOS 15 and iPadOS 15 device against these hashes before they are uploaded to iCloud Photos.

Privacy

Apple claims it protects user privacy through a technology called “threshold secret sharing,” which “ensures the contents of the safety vouchers cannot be interpreted by Apple unless the iCloud Photos account crosses a threshold of known CSAM content.”

When a photo is identified as matching a NCMEC database hash, it will be uploaded along with a “cryptographic safety voucher that encodes the match result along with additional encrypted data about the image.”

When the threshold is crossed, an Apple employee will review the content manually and make relevant referrals to NCMEC, which works with law enforcement agencies.
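For readers wondering what “threshold secret sharing” means in practice, here is a minimal Python sketch of the general idea. It is not Apple's implementation: Apple's quoted description does not spell out the construction, so a textbook Shamir scheme is swapped in as a stand-in. The names `split_secret`, `recover_secret`, and `PRIME` are hypothetical and chosen only for illustration. The assumption is that each matching photo would carry one share of a decryption key, and that the key only becomes recoverable once enough shares exist.

```python
import secrets

# Illustrative only: a textbook Shamir threshold scheme, NOT Apple's protocol.
# All names here are hypothetical. PRIME is the Mersenne prime 2**127 - 1.
PRIME = 2**127 - 1


def split_secret(secret, threshold, num_shares):
    """Split `secret` into `num_shares` points; any `threshold` of them recover it."""
    if not 0 <= secret < PRIME:
        raise ValueError("secret must be in the range [0, PRIME)")
    if not 1 <= threshold <= num_shares:
        raise ValueError("need 1 <= threshold <= num_shares")
    # Random polynomial of degree threshold - 1 whose constant term is the secret.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]
    shares = []
    for x in range(1, num_shares + 1):
        y = 0
        for c in reversed(coeffs):  # Horner's rule, evaluated mod PRIME
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover_secret(shares):
    """Lagrange-interpolate the polynomial at x = 0 from the given shares."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        # pow(den, -1, PRIME) is the modular inverse (Python 3.8+).
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret
```

With `shares = split_secret(key, threshold=3, num_shares=5)`, any three shares passed to `recover_secret` return `key`, while two shares yield an unrelated value except with negligible probability. That is the property Apple's claim rests on: below the threshold, the vouchers reveal nothing usable.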
